Instructions:

- Create any PNG image file of size 8x8. Name it whatever you want. (This example will use “ship.png” as the file name.) The first row must be entirely filled with transparent color.

- Create an empty Java project.

- Create a class folder in your project.

- Put your PNG image in the class folder, and set the build path of your project to reference the class folder.

- Copy the following code:

public static void main(String[] arg) {
    int[] pixels = new int[64];
    try {
        BufferedImage img = ImageIO.read(Art.class.getResourceAsStream("/ship.png"));
        pixels = img.getRGB(0, 0, 8, 8, pixels, 0, 0);
    }
    catch (IOException e) {
        e.printStackTrace();
    }
}

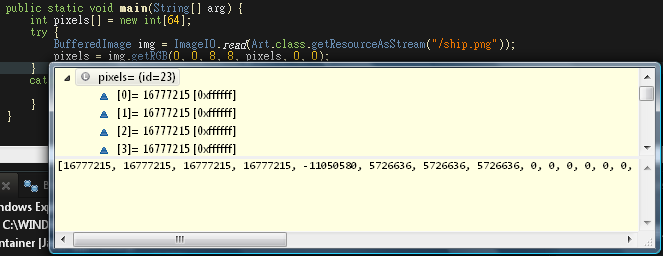

- Check the pixels array. You can see that only the first 8 elements are filled in. The remaining 56 elements are still 0, the default value for an int array.
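A possible explanation for the 8 filled elements, offered as a sketch rather than a confirmed diagnosis: the last argument of getRGB is the scansize, the number of array elements between the start of one row and the start of the next. With a scansize of 0, every row is written to the same 8 slots, so only the first 8 elements ever change. The class and method names below (ScansizeDemo, makeImage, read, countNonZero) are made up for illustration, and the image is built in memory as a stand-in for ship.png.

```java
import java.awt.image.BufferedImage;

public class ScansizeDemo {

    // Builds an 8x8 ARGB image in memory (an assumed stand-in for ship.png):
    // row 0 is fully transparent, every other pixel is opaque white.
    public static BufferedImage makeImage() {
        BufferedImage img = new BufferedImage(8, 8, BufferedImage.TYPE_INT_ARGB);
        for (int y = 1; y < 8; y++) {
            for (int x = 0; x < 8; x++) {
                img.setRGB(x, y, 0xFFFFFFFF);
            }
        }
        return img;
    }

    // Copies the whole image into a 64-element array using the given scansize.
    public static int[] read(BufferedImage img, int scansize) {
        int[] pixels = new int[64];
        img.getRGB(0, 0, 8, 8, pixels, 0, scansize);
        return pixels;
    }

    // Counts the elements that were actually written with a non-zero value.
    public static int countNonZero(int[] values) {
        int count = 0;
        for (int v : values) {
            if (v != 0) {
                count++;
            }
        }
        return count;
    }

    public static void main(String[] args) {
        BufferedImage img = makeImage();
        // scansize 0: all 8 rows land on indices 0..7, the last row wins.
        System.out.println("scansize 0: " + countNonZero(read(img, 0)) + " non-zero elements");
        // scansize 8: each row gets its own 8 slots.
        System.out.println("scansize 8: " + countNonZero(read(img, 8)) + " non-zero elements");
    }
}
```

If this is what happens in the original code, passing the image width (8) as the last argument should fill all 64 elements.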

I don’t get exactly why this line of code:

[icode]pixels = img.getRGB(0, 0, 8, 8, pixels, 0, 0);[/icode]

returns BufferedImage.TYPE_3BYTE_BGR, and not BufferedImage.TYPE_INT_ARGB as the documentation says. If I were to cast the data buffer that I obtain from:

[icode]pixels = ((DataBufferInt) img.getRaster().getDataBuffer()).getData();[/icode]

the buffer would always be a DataBufferByte and not a DataBufferInt, because of the above problem.
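One common workaround, sketched here under assumptions rather than taken from this thread: redraw the loaded image into a new BufferedImage created as TYPE_INT_ARGB, whose raster is backed by a DataBufferInt, and cast that buffer instead. The class name ToIntArgb and the helper toIntArgb are hypothetical, and a TYPE_4BYTE_ABGR image built in memory stands in for whatever byte-backed image ImageIO produces for ship.png.

```java
import java.awt.Graphics2D;
import java.awt.image.BufferedImage;
import java.awt.image.DataBuffer;
import java.awt.image.DataBufferByte;
import java.awt.image.DataBufferInt;

public class ToIntArgb {

    // Redraws any BufferedImage into a new TYPE_INT_ARGB image,
    // so that the result's raster is backed by a DataBufferInt.
    public static BufferedImage toIntArgb(BufferedImage src) {
        BufferedImage dst = new BufferedImage(
                src.getWidth(), src.getHeight(), BufferedImage.TYPE_INT_ARGB);
        Graphics2D g = dst.createGraphics();
        g.drawImage(src, 0, 0, null);
        g.dispose();
        return dst;
    }

    public static void main(String[] args) {
        // Assumed stand-in for the decoded PNG: a byte-backed image.
        BufferedImage loaded = new BufferedImage(8, 8, BufferedImage.TYPE_4BYTE_ABGR);
        DataBuffer before = loaded.getRaster().getDataBuffer();
        System.out.println("byte-backed before conversion: " + (before instanceof DataBufferByte));

        // After conversion the cast to DataBufferInt is safe.
        BufferedImage converted = toIntArgb(loaded);
        int[] pixels = ((DataBufferInt) converted.getRaster().getDataBuffer()).getData();
        System.out.println("pixel count after conversion: " + pixels.length);
    }
}
```

Checking img.getType() on the loaded image first shows which layout the decoder actually chose, which is what decides whether the direct cast can work.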

Can anyone solve this mystery?

EDIT:

Image proof:

The ship.png: